Wireless regulatory compliance rarely fails because teams ignore the rules or cut corners. Instead, compliance is often treated as friction on the critical path to launch rather than as an integral part of product development. As a result, it is perceived as slowing momentum at precisely the moment when engineering teams and executives face the greatest pressure to deliver.

In most cases, failure happens because real-world regulatory expectations are more conservative, more judgment-driven, and less forgiving than manufacturers anticipate.

Products that fail certification often do so late in the process, after testing has been completed and schedules are committed. The result is unexpected re-testing, expanded scope, delayed approvals, and lost market windows. These outcomes are not anomalies. They follow repeatable patterns.

This article explains why wireless regulatory compliance breaks down in practice, even when teams believe they have done everything correctly.

Test Compliance Is Not the Same as Passing Certification

One of the most common causes of certification delays is assumption-based compliance. Many programs move forward believing that passing RF tests is sufficient, when in reality certification demands a higher level of confidence and evidence.

Typical assumptions include:

- Passing RF testing guarantees certification approval.

- Using a certified wireless module eliminates system-level compliance risk.

- Documentation only needs to be directionally accurate.

- Minor hardware or software changes do not affect compliance.

Case Study: Certified Module but Non-Compliant System

A startup developing a smart sensor assumed certification would be straightforward because it used a pre-certified Wi-Fi module. While this reduced initial testing, the certification review revealed that the selected antenna was not covered under the module’s original certificate. The result was six weeks of delay for retesting, report updates, and resubmission, despite the team believing they had “done everything right.” Antenna selection, together with the antenna’s specifications, remains one of the most common and costly oversights in wireless certification.
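The antenna check in this case can be sketched in a few lines. The example below assumes a common rule of thumb, which should be confirmed against the module's actual grant and integration instructions: a host antenna is treated as covered only if it matches the type of an antenna listed on the module's certificate and does not exceed that antenna's gain. The `Antenna` class, the part numbers, and the gain values are all hypothetical and are not taken from the case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Antenna:
    part_number: str
    antenna_type: str  # e.g. "dipole", "PCB trace", "chip"
    gain_dbi: float


def covered_by_module_grant(candidate, certified):
    """True if some certified antenna has the same type and equal or higher gain.

    Assumption: 'same type, equal or lower gain' is the coverage criterion.
    """
    return any(
        candidate.antenna_type == ref.antenna_type
        and candidate.gain_dbi <= ref.gain_dbi
        for ref in certified
    )


# Hypothetical antennas listed on the module's certificate.
certified = [
    Antenna("ANT-A", "dipole", 2.0),
    Antenna("ANT-B", "PCB trace", 1.5),
]

print(covered_by_module_grant(Antenna("ANT-X", "dipole", 1.8), certified))  # True
print(covered_by_module_grant(Antenna("ANT-Y", "dipole", 3.2), certified))  # False: gain too high
print(covered_by_module_grant(Antenna("ANT-Z", "chip", 0.5), certified))    # False: type not listed
```

Running a check like this when the antenna is selected, rather than at certification review, is what turns the module's certificate from an assumption into evidence.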

Compliance Fails Where Assumptions Replace Evidence

A critical misunderstanding is treating RF testing and certification as the same decision gate. They are not. RF testing answers a narrow question: did the device meet defined limits under specific test conditions? Certification addresses a broader concern: can a regulator be confident the device will remain compliant across all declared modes, configurations, and foreseeable operating conditions? When that confidence is missing, certification stalls.

This gap explains why products that pass RF tests still encounter:

- Requests for additional technical justification.

- Expanded test scope.

- Retesting under new or combined configurations.

- Delayed approvals.

Regulators do not evaluate assumptions. They evaluate evidence, consistency, and risk. When assumptions surface during review, confidence in the entire submission erodes quickly. At that point, even clean test results may no longer be sufficient without added scrutiny. The failure is rarely the test itself; it is the gap between test results and regulatory confidence.

Case Study: Colocation Compliance Failure Caused by Untested Operating Modes

Under FCC Part 15, a device with both Wi-Fi and LTE radios complied with the conducted and radiated emissions limits when each radio was evaluated independently. During certification review, the reviewer raised concerns about simultaneous transmission and colocation of the Wi-Fi and LTE radios, operating modes that were declared but never exercised during testing. While each radio technology passed on its own, the system failed to demonstrate predictable behavior across all declared configurations, triggering additional testing and extended regulatory review.
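A gap like this one, modes declared but never exercised, can be caught before submission by comparing the declared operating modes against the modes that actually appear in the test plan. The sketch below is a minimal illustration of that comparison. The function name, the mode representation (a set of radio names per mode), and the example data are assumptions made for illustration.

```python
def untested_modes(declared, tested):
    """Return declared operating modes that have no matching test, in sorted order.

    Each mode is a frozenset of the radios transmitting in that mode.
    """
    missing = declared - tested
    return sorted(tuple(sorted(mode)) for mode in missing)


# Hypothetical declaration: each radio alone, plus simultaneous transmission.
declared = {
    frozenset({"wifi"}),
    frozenset({"lte"}),
    frozenset({"wifi", "lte"}),
}

# Hypothetical test plan: each radio was evaluated independently only.
tested = {
    frozenset({"wifi"}),
    frozenset({"lte"}),
}

print(untested_modes(declared, tested))  # [('lte', 'wifi')]
```

An empty result does not prove compliance, but a non-empty one identifies exactly the configurations a reviewer is likely to question.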

Passing tests is necessary. Passing certification requires evidence that compliance is robust, repeatable, and defensible under real-world operating conditions.

Documentation Is a Primary Risk Surface, Not an Administrative Task

Documentation issues rarely cause outright rejection. Instead, they cause something more damaging: loss of trust.

Product questionnaires, block diagrams, operational descriptions, and declarations form the regulator’s mental model of the device. Trust erodes when those materials:

- Conflict with observed behavior

- Omit worst-case operating modes

- Oversimplify RF functionality

- Contain internal inconsistencies

At that point, the burden shifts to the manufacturer to prove compliance repeatedly. Review timelines reset, scope expands, and previously acceptable data is questioned. Delays of weeks or months are common, even when the underlying design is sound.

Case Study: Antenna Gain Error Causes Certification Delay

A manufacturer submitted a wireless product for compliance testing along with multiple antenna options of varying gains. All antenna models, gains, and part numbers were documented in the product questionnaire and reviewed prior to testing. As is common, the client requested testing at maximum allowable EIRP/ERP power levels. Testing was successfully completed, test reports were finalized, and the full certification package was submitted to an international regulator.

An issue surfaced during the regulatory review. One antenna had been documented with an incorrect gain value, significantly lower than the actual gain. Because radiated power rises with antenna gain, this discrepancy implied that the device’s effective radiated power exceeded regulatory limits, triggering concern from the regulator.

As a result, the product required retesting, updated documentation, and resubmission of the certification package. This extended the program by an additional six weeks. A single incorrect antenna parameter turned an otherwise complete approval into a costly and avoidable delay.
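The arithmetic behind this kind of error is simple. In logarithmic units, EIRP (dBm) is the conducted power (dBm) plus the antenna gain (dBi) minus any cable loss (dB), so every decibel of understated gain is a decibel of understated radiated power. The sketch below works through that relationship. All numbers, including the limit, are hypothetical and are not taken from the case.

```python
def eirp_dbm(conducted_dbm, antenna_gain_dbi, cable_loss_db=0.0):
    """EIRP in dBm: conducted power plus antenna gain minus cable loss."""
    return conducted_dbm + antenna_gain_dbi - cable_loss_db


LIMIT_DBM = 23.0          # hypothetical regulatory EIRP limit
CONDUCTED_DBM = 20.0      # hypothetical conducted output power
DOCUMENTED_GAIN_DBI = 2.0 # gain as written in the questionnaire (hypothetical)
ACTUAL_GAIN_DBI = 5.0     # gain from the antenna datasheet (hypothetical)

documented = eirp_dbm(CONDUCTED_DBM, DOCUMENTED_GAIN_DBI)
actual = eirp_dbm(CONDUCTED_DBM, ACTUAL_GAIN_DBI)

print(documented, LIMIT_DBM - documented)  # 22.0 1.0  (appears to pass with 1 dB margin)
print(actual, LIMIT_DBM - actual)          # 25.0 -2.0 (exceeds the limit by 2 dB)
```

With the documented gain the device appears to have margin. With the actual gain the same conducted power is over the limit, which is why a single wrong parameter can invalidate an otherwise clean report.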

Real-World RF Behavior Is Harder to Control Than Designs Suggest

Wireless products rarely operate in the controlled conditions assumed during early testing.

In practice:

- Multiple radios interact dynamically

- Firmware changes RF behavior over time

- Power states shift during normal use

- External RF fields introduce stress conditions

These factors can expose issues that were not visible during nominal testing. Immunity problems, coexistence failures, or unstable RF performance often surface only when devices are evaluated more conservatively during certification review.

Regulators are aware of this gap between lab conditions and field behavior. As a result, worst-case interpretation dominates regulatory decision-making, not best-case test results.

Case Study: When the Real World Breaks a “Compliant” Device

A smart home hub sailed through laboratory RF compliance testing with minimal issues, appearing fully ready for market deployment. The story changed the moment immunity testing began in a more realistic RF environment. Placed near typical in-home Wi-Fi access points and operating all radios simultaneously, the device began to degrade. Intermittent resets, dropped connections, and unexpected spurious emissions appeared – failures that were completely invisible during single-radio, isolated testing.

The root cause was radio colocation. When Wi-Fi, Bluetooth, and other technologies transmit concurrently, internal coupling and external RF exposure may combine to create emission spikes and performance instability. These issues would have surfaced immediately in real homes but were only uncovered through colocation stress testing under realistic RF conditions.

This is why testing beyond minimum regulatory configurations matters: activating all radios and exposing devices to real-world RF environments consistently reveals hidden failure modes long before customers or regulators do.

Change Management Quietly Invalidates Compliance

Another frequent point of failure occurs after initial compliance appears complete.

Typical changes include:

- Firmware updates

- Component substitutions

- Antenna modifications

- Enclosure or mechanical revisions

These are often treated internally as low risk. From a regulatory perspective, they may not be.

Permissive change thresholds are intentionally conservative. When changes alter RF behavior, power distribution, or operating modes beyond what was originally approved, prior certifications may no longer apply. These issues often surface late, when regulators review updated submissions or detect inconsistencies.

Unexpected re-testing and re-certification at this stage are costly and disruptive.

Case Study: Software ‘Optimization’ Introduces Major Safety Implications

A client requested compliance testing for a 5 GHz Wi-Fi device operating in bands that require Dynamic Frequency Selection (DFS). DFS relies on complex software algorithms to detect radar signals, particularly those used by weather radar systems critical to aircraft safety. The device completed lab testing, passed verification at FCC labs, and was eventually certified.

After deployment, the FCC received reports of rogue devices in the field. An audit revealed that these same units were effectively deaf to radar signatures. The root cause was traced to a software optimization made after certification. An ‘optimized’ DFS algorithm was implemented by a software engineer without follow-up verification testing. The result was that a previously certified device no longer met DFS requirements, forcing regulatory action, a recall, and a fine.

This case illustrates how post-test software changes, even well-intentioned ones, can undermine compliance and introduce serious risks with real-world safety implications.
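One way to make such changes visible is to record a fingerprint of every RF-relevant software component at certification time and compare each later build against that baseline. The sketch below illustrates the idea with SHA-256 hashes. The component names, the byte strings standing in for firmware images, and the function names are hypothetical, and deciding which components count as RF-relevant remains an engineering and regulatory judgment.

```python
import hashlib


def fingerprint(blob: bytes) -> str:
    """SHA-256 hex digest of a component's binary contents."""
    return hashlib.sha256(blob).hexdigest()


def rf_components_changed(baseline, current):
    """Names of components that were modified, added, or removed since the baseline."""
    names = set(baseline) | set(current)
    return sorted(n for n in names if baseline.get(n) != current.get(n))


# Hypothetical fingerprints recorded when the device was certified.
baseline = {
    "dfs_detector": fingerprint(b"dfs algorithm v1"),
    "tx_power_table": fingerprint(b"power table v1"),
}

# Hypothetical later build with a modified DFS algorithm.
current = {
    "dfs_detector": fingerprint(b"dfs algorithm v1 optimized"),
    "tx_power_table": fingerprint(b"power table v1"),
}

print(rf_components_changed(baseline, current))  # ['dfs_detector']
```

A non-empty result would not by itself mean the device is non-compliant. It would mean the change needs a compliance review, and possibly verification testing, before release.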

Why These Failures Repeat Across the Industry

These failure patterns persist because compliance is often treated as:

- A checklist

- A test milestone

- A late-stage validation activity

In reality, wireless regulatory compliance is a risk management discipline. It spans design decisions, documentation accuracy, test readiness, RF behavior under stress, and regulatory judgment.

When any one of these elements breaks down, the entire program is exposed to delay and rework.

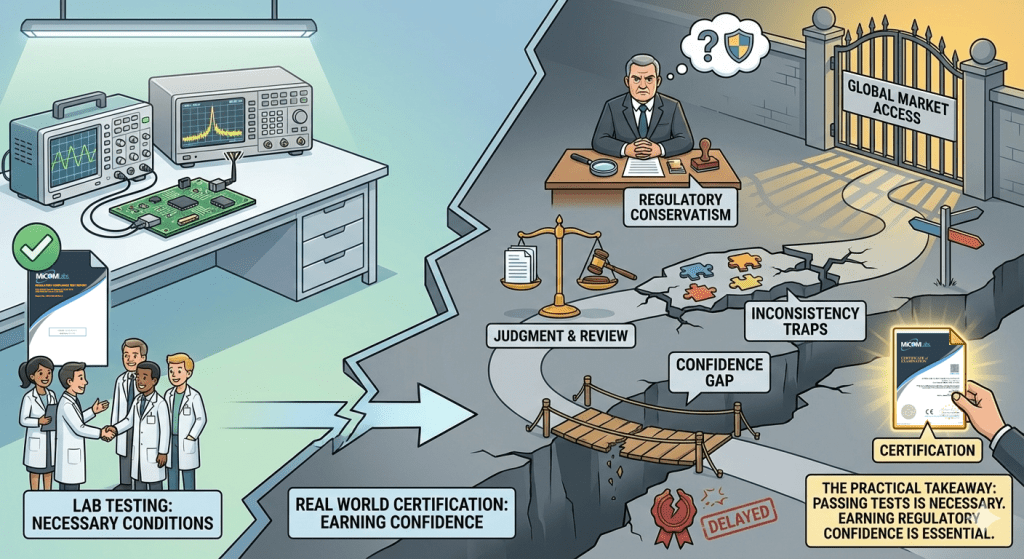

The Practical Takeaway

Wireless regulatory compliance fails in the real world not because teams ignore requirements, but because they underestimate:

- How conservative regulators must be

- How much judgment is applied during review

- How unforgiving inconsistencies become

- How quickly confidence can be lost

Passing tests is necessary.

Passing certification requires earning and maintaining regulatory confidence.

Understanding where compliance breaks down in practice is the first step to preventing delays, re-testing, and missed market opportunities.

For teams seeking procedural details on RF testing execution, certification workflow, and preparation, additional technical guidance is available through MiCOM Labs’ RF compliance resources.

Final Thoughts

The difference between successful certification and costly delay is rarely a single test result. It is almost always the cumulative effect of assumptions, inconsistencies, and underestimated risk.

That is where compliance breaks down in practice.